An Interview...

Dr. Louisa Moats – Teaching Teachers to Teach Reading

Dr. Louisa Cook Moats is Vice President of the International Dyslexia Association and a Consultant Advisor to Sopris West Educational Services. Dr. Moats specializes in the implementation of school-wide interventions for improving literacy. She directed the NICHD Early Reading Interventions Project in Washington, DC, and, as a Distinguished Visiting Scholar, worked on the California Reading Initiative. She is the author of many books and articles, including Speech to Print: Language Essentials for Teachers, Parenting a Struggling Reader, and LETRS (Language Essentials for Teachers of Reading and Spelling).

The following transcript of our conversation with Louisa Moats is a compilation of two phone interviews conducted in October and November of 2003. We found Dr. Moats to be a teacher of teachers who is dedicated to improving children's lives by improving their literacy. Her work in neuropsychology and on large-scale reading projects has given her a unique perspective on the social-educational inertia that constrains how teachers and parents think about the challenges involved in learning to read.

The following two videos are excerpts from our later video interview with Dr. Moats:

Video: Hollow Platitudes (More on Academic Danger)

Video: The First Millennium Bug (More on The First Millennium Bug)

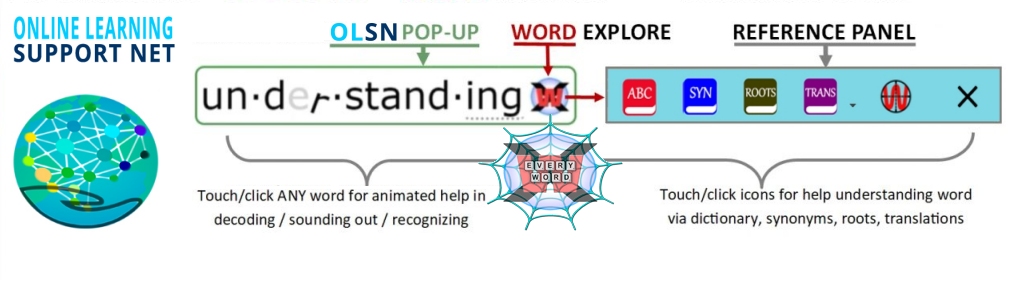

-----------------------------

Part 1: October 30, 2003:

David Boulton: It’s a pleasure to speak with you. Let’s start with some background on you. Tell me, why do you do this work?

Personal Background:

Dr. Louisa Moats: What brought me into the whole field of reading was that I started out as a neuropsychology technician in one of the first neuropsych labs to be established in Boston, in 1966. I had just graduated from college, and my first introduction to learning disabilities was through the clients who came to the neurology clinic. I became very interested in brain-behavior relationships, particularly with regard to language processes. Then I figured that testing people was not nearly as interesting as trying to treat or teach them, so I got into the first group of federally funded learning disabilities teachers on a full scholarship.

I went to get my license as an LD teacher and in the process of getting that certification I kept thinking, ‘I’m not learning anything of value here.’ I came out of Peabody College with a Master’s degree in learning disabilities and went to work as a teacher and found myself completely befuddled, although I didn’t know enough to know what was missing or what was wrong with what I’d been taught or what I was doing. I just had this terrible feeling that I wasn’t really helping these kids. I was told to fix their learning disabilities, but not to teach them to read.

Then I went on teaching in several different kinds of settings in public and private schools. After about eight years of that and a brief career as a professional musician, I came back to Boston to work in the neuro-psych lab as the educational specialist and was told by the director that I needed to get a doctorate in order to keep my job because the hospital required it. I kind of bumbled into a doctoral program in reading at Harvard where Jean Chall was the departmental professor. It wasn’t a knowledgeable decision on my part to get a doctorate in reading; I just thought it was the next best thing to getting a doctorate in learning disabilities so I might as well sign on.

In the process of doing that, I realized how much I’d been missing in knowing basic disciplines, knowing about linguistics and reading psychology and cognitive psychology. I finally got a very good grounding in those things and very good training in language. I went on after that to be in private practice as a consultant and diagnostician and I got licensed as a psychologist and tested many people when I was living in Vermont. That’s when I got to know Reid Lyon. He was in Vermont at the same time I was and we did a lot of work together for eight or ten years before he went to the National Institute of Child Health and Human Development. He did a lot to educate me about research and evidence and drew me into the research community.

I had started writing a lot and going to NICHD symposia. I was teaching graduate courses to teachers the whole time and then it began to dawn on me through all these experiences that the teachers I was working with, by and large, just had no idea at all about the things I knew about at that point. Their training wasn’t equipping them to help the kids. Most of the clients I had in my clinical practice simply hadn’t been taught well and Reid really got me to understand that what we were facing was a crisis of understanding and implementing what we knew from research.

That was where we were in 1986, and then it took another ten years to even mobilize the National Reading Panel. The two of us were on a speaking circuit for a long time, sometimes together and sometimes going different places. Then Reid got me into working on the California Reading Initiative for a year. I’ve always taken these assignments from him. He said, “You have to go to California, they’re starting this new reading initiative, they need you out there Louisa.”

In California I learned a lot about what it takes at the policy level to change anything in education and teacher education. I was working with the leaders of the California Reading Initiative and got to know Marion Joseph. I was working with the people who got all of the Packard money to start the coach training. I did that for a year and we really had a big impact and I learned a lot about state politics.

Then came the opportunity to go to Washington to direct a five-year study of reading instruction and reading development in inner-city schools. The project was funded by the University of Texas and headed by Barbara Foorman. I got the job of running the D.C. site of that study. Barbara was in Houston with Jack Fletcher and Dave Francis, and we worked on this as a team. I’m still writing up data from that study. It was tremendously informative, and it was extremely difficult work because the schools had so many problems and were so dysfunctional.

Nevertheless, in the course of the four years that we stuck it out in D.C., we were able to bring these kids to the average range through intensive professional development for the teachers and by equipping them with comprehensive reading programs. We put coaches in the classrooms and had within our project a very consistent, research-based approach. In the better-functioning schools the kids were above average.

I came out of that experience knowing that the district was totally uninterested in anything we were doing; there was no follow-through, and there was no support from the district to carry on any of the work we did. All the schools are back where they were. Two years later I got a call from the Assistant Superintendent saying, “We need your help here.” At that point I just thought to myself, no, I’m not going to do that unless something structural changes in leadership, because it’s impossible to get information and practices into the classroom without the kind of coordinated effort that has gone on in Los Angeles and a few other districts that we can cite as implementation sites that have made a difference.

Congressional Testimony and The Design of 'Reading First':

Dr. Louisa Moats: When I was in Washington I testified to Congress three times. I had several visits from the President in our classrooms and I had lots of photo opportunity sessions with policy makers who wanted to appear as if they were doing something about this problem. They also wanted to know more about what was going on in the schools that were successful.

I was one of the people involved in formulating the Reading First Initiative. My focus there has been the professional development blueprint. Now I am working primarily on professional development. I am writing a lot of materials and conducting institutes, both on my own and with a team of people, mainly for states that want to include my approach in their Reading First Initiative or in a statewide reading initiative. I am still seeing how all of this unfolds.

Knowledge Gulf:

Dr. Louisa Moats: Every day that I’m in a school working with teachers, I continue to be astounded by the gulf of knowledge: the gulf between our knowledge base in the scientific community and the practices that go on in teacher training.

I participated in WETA’s Reading Rockets, and that was really good. We just need so much more public awareness and mobilization of public interest and understanding.

David Boulton: That’s what we hope to help happen here. I appreciate your story, it’s very helpful. A number of people that have wound up in this space together seem to have gone through a similar route, sometimes career progression, like getting the doctorate, and sometimes through a process of jumping across disciplines.

Inertial Resistance:

David Boulton: I get the sense that you’ve gone from the micro-time processing dynamics on the inside of the brain involved in doing this, to understanding the instructional paradigm inertia and the social educational challenge.

Dr. Louisa Moats: Yes.

David Boulton: That really is what is retarding our progress. There’s a knowledge gulf, but that sounds passive. There’s almost an active, inertial resistance on the part of the entrenched systems.

Dr. Louisa Moats: Yes.

David Boulton: We need to get underneath the polarities that underlie this resistance.

Dr. Louisa Moats: Yes. I agree.

David Boulton: I think your passion is pretty implicit in all of this, but I would like to give you a chance to speak to that for a moment. I appreciate the story of how you’ve moved through this learning journey that has allowed you to develop an intelligence and knowledge in so many related domains, but what drives you?

Tragedy of Reading Failure:

Dr. Louisa Moats: What drives me? It is the whole realization of the difference between what is and what could be for kids’ lives, and seeing firsthand, at every level of society and in every age group, how reading difficulty affects people for life. Then on the other end of things, it is seeing at every level what is not happening that could happen to prepare teachers to address the needs of so many individuals who could benefit from informed instruction and who don’t get it, because our whole educational system from start to finish is simply not set up to ensure that people do learn to read.

My subtext here is that they learn competence in their own language, which is an even broader issue. As I work in high poverty schools and with second language kids, I find it more and more difficult to talk only about reading when for me reading is so inextricably associated with oral language use, comprehension, and with writing.

This morning I am analyzing writing samples from our D.C. study and seeing the evidence in front of me that these kids whom we taught to read well enough so they score in the average range are never going to make it in life because they can’t write a grammatical sentence with a past tense ending on it. They’ve gotten through fourth grade without anybody teaching them in any kind of systematic way how to handle simple syntax, complex syntax, and standard word usage. They simply aren’t going to make it because I know that they’ve gone too long without anybody doing anything.

If you take these kids at the fifth grade level and give them sporadic, incidental feedback about errors, it’s not going to prepare them for what they’ll face in life. These kids will not make it into higher education; they’re not going to make it into jobs that require writing, even if they can read at a basic level. It’s a tragedy. It’s a tragedy and it’s a treatable one.

Lack of Adequate Teacher Preparation:

Dr. Louisa Moats: On the rare days when I lose my passion about this, I lose the conviction that anyone will ever be able to do anything about it because I see so little effective movement in the selection of teachers. I see so few people willing to stand up and say you can’t have a non-verbal teacher teaching reading to first graders. There are so few people who are willing to set standards and to talk about what a teacher should really know and to hold any teacher candidates to some kind of a standard. There are so many excuses and so many forces aligned against the adequate preparation and nurturance of teachers.

On some days I just feel overwhelmed and as if nothing is really going to move here and by the time I die I’m not going to see… it’s hopeless. I just throw up my hands and think forget public education, just be a supporter of charter schools or something else because it’s just… Those days are rare. I would say I have about two days a month like that.

David Boulton: I recently interviewed a leader of a reading organization and was flabbergasted at the suggestion that the problem really wasn’t about children learning their way through the code, that children should be relying on other kinds of guessing strategies. My jaw dropped.

Dr. Louisa Moats: Yes, and yet I find that, the way things are now, it is still the most commonly held belief.

David Boulton: That’s what we’ve got to get at. Why is it that people hold this belief? What is it that underpins this belief? What you’re saying and what I’m hearing everywhere that I go is that we’ve got this crisis – and we can come back and talk about writing; no question they’re related and they’re both artificial code processing skills – but they’re distinct in terms of the processing complexity and in terms of speed. With reading, the virtual assembly of the projected reading stream has to happen much faster than in writing, so they’re distinctly different, though related.

All But Fating:

David Boulton: However, relative to reading, everybody seems to agree that most of our children are to some degree having difficulty with it. It’s all but fating their lives.

Dr. Louisa Moats: Right.

David Boulton: According to Reid Lyon and James Wendorf, ninety-five percent of the children that are struggling with reading are instructional casualties.

Dr. Louisa Moats: Yes.

David Boulton: It’s a consequence of an unnatural, overwhelming ambiguity forced upon the child while nobody is giving them a stairway through it before they shame-out to the process. The shame itself then impedes their cognitive ability to process it, as well as diminishes their self-esteem in general with all of its transferred effects.

So we have this massive problem that when we cut it down has to do with the social-educational paradigm-inertia.

Dr. Louisa Moats: And why is that the case? You’re extremely articulate about it. I’ve tried to reflect on this a lot.

The following video excerpt is from our later video interview with Dr. Moats:

Video: Confusion>Shame (More on Shame: The Dark Heart of Reading Difficulties)

Reading is Rocket Science:

Dr. Louisa Moats: There’s this monograph, I don’t know if you’ve seen it, called ‘Teaching Reading Is Rocket Science.’

David Boulton: Why do you call it rocket science?

Dr. Louisa Moats: We are never going to get anywhere if our approach is that teachers ought to be able to do this simple thing called teaching reading. I think the rocket science of all this has to do with the requirement that a good teacher understand what is involved in kids learning the alphabetic code, and that requires the meta-linguistic awareness that speech sounds are different from letters and that speech-sound processing is a requirement and underpinning for learning the code. I find that that’s kind of counterintuitive. People don’t get that. They don’t think about it; they don’t know what we’re talking about.

One of the most common findings in my studies of teacher knowledge and teacher proficiencies is that even experienced teachers of reading really do not know speech sounds. If I ask them a simple thing like how many speech sounds are in the word ‘know,’ spelled k-n-o-w, they’ll tell me there are four speech sounds in the word, because they don’t know how to separate their own knowledge of orthography from a specific awareness of speech sounds. Because we’re wired up to process phonology at an automatic level and extract the meaning from speech, there’s no requirement for the average person to be meta-linguistically aware at the phonological level.

David Boulton: Phonemic awareness, as it’s normally discussed, is an artifact of the code processing required here, not a consequence of the natural, oral language processing human beings have.

Dr. Louisa Moats: Yes. It’s artifactual knowledge that has to be acquired and reflected upon in order for a teacher to be able to teach intentionally and to read the behavior of the kids to make decisions about whether they are getting it or not, to forge that pathway even if you put a very good program in their hands.

David Boulton: When you describe it as knowledge, doesn’t that in a way sidetrack us? We’re talking about developing faster-than-conscious processing reflexes. We’re talking about active processes that we can describe with a map-like overlay of knowledge. However, knowledge of this alone isn’t what will actually translate into processing it this way.

Dr. Louisa Moats: Knowledge is the first step, and then there’s a whole repertoire of behaviors involved in teaching that are actually very easy to mess up. I say to the teachers, ‘You know, this looks simple, but the minute you get up here to role-play what I’m talking about…’ Just a simple thing like segmenting speech sounds using Elkonin boxes: I hardly know any teachers who know how to do that. There are so many ways to mess it up, and they come from a misunderstanding of the objective, of the activity, and of the place of the activity in the developmental sequence of learning that the child has to be guided through.

So there is a knowledge base for teachers, first of all, that is the beginning point for them. The knowledge of the structure of their own language, knowledge of what we’re talking about when we talk about phonemes and phonology and orthography, knowledge of how the writing system is structured so it doesn’t seem like a mysterious mess to them. They communicate to kids the confidence that the spelling of any word can be explained, and that there is a path through all of this. Then there’s this whole repertoire of skills in delivering the instruction and giving corrective feedback that requires an awful lot of coaching and modeling and supervised practice for people to really become effective at it. The more involved the kids are, the more challenging it becomes.

What I’ve found is if I can just get to first base with the average teacher out there, I’m not going to get to a highly sophisticated level, but if I put a good program in their hands they will get better results than they were getting before if I can just get them to do some basic things that are fairly rudimentary. They are not experts, but they can get somewhere that they weren’t getting before. Then it takes, for the ones who really get good at this, several years or more. That is what I mean by the rocket science.

Science vs. Whole Language Theory:

David Boulton: Good, that’s helpful. Let’s back up to a high-level summary of this. Again, we’re saying that most teachers don’t understand the code and how the brain learns to process this code into a simulated or articulated speech stream, and that in the absence of that understanding, and, you could argue, to some degree out of shame avoidance about it, there’s a clinging to an almost religious explanation of this.

Dr. Louisa Moats: Yes, and wrong-headed explanations that seem to have some face validity in some other principles of learning and teaching that are important, like literature is valuable and enriching background knowledge.

David Boulton: Yes, and being meaning-centric and recognizing that cognition doesn’t do anything without affect, and that the affect of interest is really the channel that good learning happens in.

Dr. Louisa Moats: Yes. Then I think we could characterize it from some of those other theories that have intuitive appeal. If you don’t understand language processing and code acquisition then you’re going to be easy prey for people who come along with whole language theories because they seem to make intuitive sense and you won’t really know why they don’t make sense.

How Do We Help People Understand the Code:

David Boulton: Right. So the question is how do we create a new reference ground for how teachers and parents think about this reading process and must we go through the complexity of ‘rocket science’ before they can have this new ground that can dispel all of these intuitively convenient models?

Dr. Louisa Moats: I think there is a threshold of understanding that you would want to achieve with a program like this. If people get beyond that threshold of understanding… It’s the point in my workshop where somebody sits on the edge of their chair and says, ‘The light bulbs are going off! The light bulbs are going off!’ Then I know that they are going to pursue their own learning in the right direction because once people get this fundamental insight into what we’re talking about, then they don’t go back.

David Boulton: Yes, they don’t need to be pushed, they need to be resourced as they pull their way into it.

Dr. Louisa Moats: They do. So what makes the light bulbs go off? I think it’s a combination of things. My strategies are first of all to give them experiences with phonological processing that are apparently simple tasks that they mess up and that are ambiguous if they don’t know how to think about the phonological system. In a workshop my strategy is this: we start counting speech sounds and in a room of forty people I’ll get a bunch of different answers to a simple question. Then I’ll show I can do that ten times in a row. Next I say, ‘Wait a minute, I’m asking you how many speech sounds are in a one syllable word and I’ve done this ten times and I have not gotten the same answer from everyone in the room once. What’s going on here?’ So we start with that.

Then I do a simulation of a learning to read exercise where I use novel symbols for phonemes and ask them to go through the process of learning the association of sounds to these symbols and then the process of blending them together. They experience speech-sound confusion and difficulty remembering sound-symbol links. When they have to be applying those associations in decoding they start to mess up and experience being disfluent. They experience word confusions from words that look similar and at slow processing speed. Then I kind of bait them into telling me that I’m going too fast, I put too much in the lesson at once, I’m not giving them enough practice and please don’t give them anything new until they have these eight speech sounds under their belt.

I put them right in the shoes of the learners and then we talk about what the role of context is in all of this – how much did that help. They come up with the basic principles of reading acquisition from the learner’s perspective and I don’t have to tell them everything, it comes out of the group. Then I show films of eye movement studies so they understand the physiology of scanning text.

Another thing I do is talk about the history of the invention of the alphabet in relation to how long humans have had oral language, how long it took them to invent written language systems and the alphabetic systems as the latest developing skill. We talk about how it’s logical in looking at the data on writing systems that we’re just not wired up to do this very easily.

I point out all the statistics on how hard it is for people to learn it. Then I teach them explicitly about the speech sounds and the symbols that we use to spell them and for most people it’s a total ‘aha.’ I’ve never had anyone tell me it’s irrelevant. I’ve had thousands of people tell me they should have learned this in their licensing process. I have people telling me for the first time that they understand, that the code system makes some sense. They never knew how to explain these things to their kids. Some of it is just giving people information that also helps them see what we’re talking about.

The last thing I do (I have twelve modules on this, but this is the last of the entry-level experiences) is to put in front of them the writings of kids whose spellings are explainable through phonological analysis rather than through some kind of visual-orthographic analysis. Through this experience they see that what I’m talking about is directly relevant to explaining kids’ behavior.

David Boulton: Yes, and that the kids’ behavior is quite natural and we’re asking them to do something unnatural in relation to that.

Dr. Louisa Moats: Yes.

Children of the Code Overview:

David Boulton: Excellent. We track very well.

The first part of the series is really to put the code in perspective. We’re going to go from the beginning of the alphabet and the different theories of its origin, to its spread around the world, its effect on the planet, and its ‘operating system’-like enablement of the Greek and Roman civilizations. Then the evolution of letters, the Greek vowels, the Roman introduction of punctuation, the spread of the Latin writing system by the Romans, and its collision with languages that had more sounds than it could represent, particularly, of course, English.

Dr. Louisa Moats: Right.

David Boulton: We will explore how the letter-sound relationships evolved from the days of Plato, who said, ‘Once we knew the letters of the alphabet we could read,’ on up through the phonetic-correspondence erosion that we call the First Millennium Bug, where, while nobody was minding the store, this strange pairing system started to evolve to compensate for the fact that reading could no longer cue speech the way it could when the code better matched the spoken languages of the Greeks and Romans.

We intend to focus a lot on the added unnatural challenge to the brain of buffering (holding in working memory while processing) this ambiguous code and working it out in time for it to move at the speed of speech.

We talked about the unnaturalness a bit; I really appreciate what you said about the rocket science. I also believe it is critical that parents and teachers begin to understand where this code came from and what a relatively recent invention, in evolutionary terms, it is. And, as you mentioned, it has no neurologically adapted wiring to run it; it’s all dependent on external learning. Few realize that by virtue of a series of accidents over the past thousand years, with no one ever concerning themselves with how easy it was for young children to read (until very recently, anyway), this thing has developed its own social inertia.

Basic vs. Proficiency:

David Boulton: Let’s discuss basic and proficiency. There’s a lot of conversation out there about which of these are important to understand to use as a benchmark in talking about the dimensions of the reading problem. There are also different interpretations of what is the actual definition of these two descriptions. Do you have anything you can say about the distinction between basic and proficiency and what they mean to you?

Dr. Louisa Moats: Tell me the distinction again.

David Boulton: Between being basically able to read and being proficient in reading. Right now, in the 2002 NAEP report, they’re saying eighty-eight percent of African American fourth grade children are below proficient and the average overall is sixty-four percent below proficient. What does that mean?

Dr. Louisa Moats: I think it virtually means that they avoid reading as adults and simply do not look to text as a source of information. I don’t make this public a lot, but one of the astonishing phenomena that I encountered when I was conducting research in the Washington D.C. school system was that the adults I was working with, who often were products of that school system and who may themselves never have gotten beyond a fourth grade level but got through school somehow, never read memos, never sent memos, and never used the internet. The only way we ever got anything done was if I personally went around, talked to people face to face, and reminded them of when we had meetings.

Reading Aversions:

David Boulton: They were reading averse.

Dr. Louisa Moats: Reading averse, yes. That’s a good way of putting it.

David Boulton: I just had a conversation with a senior consultant to the Senate of a major state. I was discussing the concern for proficiency. His pushback was that the only real concern to the state, when it came to understanding the dimension of the reading problem, was the ‘below basic’ level.

It is as if there’s this failure to understand that basic just represents a limited instrumental ability to use the code but not one that’s sufficiently ecological or efficient to engender wanting to use it when you don’t ‘have to’ – to open it up.

Dr. Louisa Moats: Yes. Well put. I’d love to have that whole can of worms open up for public discussion. As it is there’s no discussion about it.

David Boulton: Well, it’s so critical. What separates processing the code at the basic level from processing it at the proficient level is the speed and low bandwidth consumption of the processing infrastructure that has formed to do it.

Dr. Louisa Moats: Yes.

David Boulton: Basic and proficient reading are both outgrowths of the early infrastructural development of the code-processing system inside the human brain. Do you support that?

Dr. Louisa Moats: Yes, very much so.

David Boulton: That is a real missing ingredient, because everyone focuses on the basic level and drops concern for the proficient level like it’s not a big deal. It seems to me that achieving proficiency is the big deal, because that’s where most of us are having a problem.

Dr. Louisa Moats: Yes, it is. I think it would be great to remind people what the reading habits of the American public are and are not. There are good surveys of that: how many people actually read newspapers, how many people actually use reading in their lives and what kind of reading they do. Those are hard things to get a hold of.

One of the reasons the Washington School District was so hard to help was that it was staffed by people who do not read and who are not even aware of the resources available to them in that city because they don’t read. It was a very interesting social education for me.

David Boulton: Thank you for all the great work you’re doing. Together I think we really can make a difference and we’ve just got to.

Dr. Louisa Moats: Yes, well I love your ideas. I look forward to talking with you some more.

David Boulton: Thank you Dr. Moats.

Part 2: November 19, 2003:

David Boulton: Hello Dr. Moats. We had a really good conversation last time and now I’d like to continue our discussion.

Dr. Louisa Moats: Yes, I’ve been thinking a lot about our exchanges.

Instructional Confusion:

Dr. Louisa Moats: In the couple of weeks since we spoke, I’ve had several more experiences that have made me think a lot about what we were talking about before. One is that I finished analyzing a group of writing samples from fourth graders in our D.C./Houston interventions project and presented preliminary results at the International Dyslexia Association meeting. I also attended the IDA meeting, and I’ve been a board member and a participant in those meetings for about twenty-five years. First of all, it strikes me how slowly educators’ awareness changes, and how hard it has been for all the scientific work represented at that meeting to actually have an impact on what goes on in classrooms. That was apparent all over again in the discussions.

I sat in on a session that a colleague of mine was doing on phoneme-grapheme mapping. She has designed an instructional procedure for phoneme-grapheme mapping, which is a nice little supplement for teachers. In the workshop I saw the same thing I see all the time when I’m working with teachers: the rather profound confusion that exists even among people who have degrees and certificates in reading instruction. Many aspects of the code are unclear to them, and they go merrily along teaching their programs and teaching kids without ever resolving the questions that come up in a formal presentation. As I watched my colleague lead them through the phoneme-grapheme mapping exercises, it was fun to see somebody else encounter the same questions and areas of confusion in the teacher audience that I experience all the time.

I was struck again by the universality of those confusions, and by how oblivious the teacher certification process is to equipping teachers with that knowledge base. Schools typically don’t take it on as a responsibility; they typically don’t teach it very well, if they teach it at all. Even when the instruction is there, it is not well conceived. People leave those certification programs with the responsibility of teaching kids but without the tools to make any of these English code issues clear to kids.

Adult Centric Thinking About the Code:

David Boulton: I think you’re right on the button, and I want to draw out one of the distinctions I see missing here. There’s a huge difference between someone like Dr. Venezky – a specialist in orthography and the history of our spelling system, who can stand on the other side of learning it all and make a map of the patterns – and the child who is struggling to learn to read. Pattern analysis is an entirely different business from helping children up a stairway through the confusions in a way that is relevant to their experience of it, which can’t loop through such a map.

It seems to me that the teachers and reading professionals in general are operating more on the Venezky side of this. They are on the other side of going through all of this and then looking back at the code with the knowledge of computer science and pattern analysis which enables them to bring to it an abstract, meta-linguistic analysis. But that is way beyond what the children can bring to it at the time that they’re confused with it.

Helping Teachers Understand the Code:

Dr. Louisa Moats: Yes, but I think there are some very systematic, methodical, and successful approaches to teaching the code to children. They don’t resolve all of the problems and issues, and phoneme-grapheme mapping is just the first step in the process. What I find is seldom taught, but could and should be taught, is the idea of layers of orthography: the history of language, the history of English, the base layer of Anglo-Saxon and its representational pattern versus the Latin layer that was incorporated later and its representational pattern. Especially important is the relationship between spelling and morphology, and then there are the Greek-derived words used in math and science, which have their own structures.

When teachers are led to understand how we came by the orthographic patterns we have in modern printed English, and look at the layering over the last thousand years or so, and then also consider the units of representation (phoneme-grapheme correspondences, written syllable patterns, morphemes and morphological units), then the whole menu of what should be taught to kids over their elementary career becomes clear, and people can understand that basic phonics is very limited. Basic phonics unlocks the code for kids only to a rather unsatisfying level: sound-symbol correspondence. It doesn’t teach them anything about the patterns that have to do with word history, word meaning, and the word’s grammatical role.

However, I find that all of that is teachable, and that once teachers view their language from that perspective, they learn something about morphology, which is a huge missing piece in their own education. I can’t tell you how many times I’ve worked with teachers who have no clue what a Latin root or a prefix is, or why the stress patterns are as they are and how they change in derived words. Once they’re taught that, they can see that they can address the code, or word study, at that level, building up from the base of phoneme-grapheme correspondence. If the kid has that base, they can move on to reading by syllable chunks, which I see as a stepping stone into morphology. That is where you want to be, and the way you want to teach kids about word structure and the relationship between spoken and written language from about fourth grade on.

The teachers get no instruction in this at all in their teacher preparation; if they get anything, it is basic phonics. But once they are enlightened and shown some good instructional programs, they can take kids an awful lot farther, and they recognize it. This job of studying the print-to-speech relationship is one that all teachers should share, certainly throughout the kids’ elementary years, and when we get to older poor readers in high school, or to adults, this kind of word study is relevant for them as well.

That is where I’m finding that I’m unlocking some insights for teachers. The goal of the teacher preparation I’m involved in is to have teachers leave the training convinced that the spelling of any word can be explained in some logical way. There are very few words whose spellings make no sense whatsoever. I really have trouble thinking of any, and I know that if I go to a dictionary and look at where the word came from, its language of origin, when it came into English, how the spelling has changed over time, and how it used to be pronounced, I can usually find a pretty good explanation of why the word is spelled the way it is now.

Code Negligence:

David Boulton: Yes, and I can understand how it is helpful to the adults from a teaching point of view to give them some structure to understand all of this variation. And yet it’s a long circuit to be helpful to the children.

If we go back to the days of Plato, reading was a matter of scanning letters that had very definite sound correspondences. You saw the letter, you said the sound, and if you saw and said the letters together fast enough, you were reading. That is no longer the case today, and we’ve gotten from there to here through a series of accidents. Whether it’s Venezky or Naomi Baron or Tom Cable or John Fisher or other linguists and historians of the code, they are all saying yes, the Latin writing system was confused with the English spoken system in a way that nobody was paying any real attention to.

On the one hand, we can go back after the fact and explain that this part came from the Greek philosophers, this came from the Dutch, and this came about because Caxton didn’t have enough type, since it cost too much money to make a new font in the late 1400’s. But the way the orthography came to be what it is tells a story of general negligence: a lack of concern for the ecology of processing the orthography and for the challenges children would face learning it. It was for an elite group, by an elite group that was not concerned with the code as a social technological interface for the mass of humanity.

So while we can make a certain sense of it (as you said, spelling can be explained if you dig deep enough), to understand why it’s confusing we have to loop all the way through abstract knowledge of it as a code, its history, and the various influences and forces that shaped it. Knowing all that doesn’t necessarily help the children who are struggling through the confusion.

How Do We Create an On-Ramp:

David Boulton: The question is, how do we create an on-ramp that is responsive to the kinds of confusions the child is actually experiencing? I don’t think they experience the confusions in terms of vowels and consonants, or in terms of morphology. They don’t yet have enough knowledge of those structures to relate to their confusions in that way. They have grown up with a certain level of oral language facility and a beginning set of associations between the sound system and the letters, and that is their takeoff point. How do we engage them from there and then step them through and up into reading?

Dr. Louisa Moats: I think there is a path through the code that is pretty darn effective. What they need to learn at different age levels and stages of reading development corresponds very well to Linnea Ehri’s ideas about the acquisition of word recognition and the continual acquisition of spelling knowledge. I think the kids’ errors are diagnostic of where the confusions lie.

As an example, when we analyzed the fourth grade writing samples, the biggest category of error in the spontaneous writing was inflectional endings: past tense, plurals, -ing. I think of those errors and confusions as actually morpho-phonological, not just a matter of underdeveloped morphological awareness. The problem is influenced by the fact that these kids leave consonants off the ends of words anyway at the phonological level. But those confusions were striking and remarkable, whereas at the level of sound-symbol representation the errors were fewer and less striking, which told me that the kids had learned basic phonics fairly well, except for the few who were still afflicted with real problems in that area. They were acquiring habits of sight vocabulary for high-frequency words, but they just have a very poorly developed sense. There were also interesting errors on other suffixes, not just inflections.

I think there is an order to the acquisition of orthographic knowledge that informs instruction quite well. It doesn’t solve the problem altogether, because there are certainly kids who still struggle even when the instruction is very good; their brains aren’t processing the information very well. But on the whole I believe there is such a thing as a spelling instructional level. There is certainly a reading instructional level that can be defined in terms of the correspondence units kids know and don’t know, and those progress from individual phoneme-grapheme correspondences, to conditional patterns and one-syllable words, to syllable representations in the orthography (for instance, when you end up with a double letter and what it’s doing there). All of those things are teachable. Then on to morphology. There is an order in all of this, I think. I wouldn’t know what to do if I didn’t think so; otherwise it would just be a big hodge-podge.

Buffering a Complex System:

David Boulton: Right, which is a dangerous convenience. I understand what you’re saying. One of the things I’m trying to get a handle on is that in this conversation about phonics, morphology, the kinds of confusions you’re referring to, and writing and spelling as they relate to prefixes and suffixes, what we’re describing, if we step back, is a complex, multi-layer system with ambiguities that interact across all these different layers at once.

Dr. Louisa Moats: Yes.

David Boulton: This is much more complicated than the decoder in a DVD or CD player. In terms of the number of looping exceptions going on between different layers, it is much more complicated than the decoder that knows how to display a web page. Is it live (as in ‘to live’) or live (as in ‘a live wire’)? Read (present tense) or read (past tense)? I can’t know that from inside the code. I can only resolve it from the context in which it’s used.

So these different loops all have to be buffered up in the brain. Nothing in evolution ever did anything like that before. I see this as an incredible challenge, and then I ask the question: How much of this huge field of complexity, and all of our work about it, is a consequence of the lack of ecology in the code?

I know a lot of people get right to this point and stop thinking: ‘There’s nothing we can do about the code, so there’s no use thinking about it.’ That’s the edge I want to push up against. I am not an advocate for spelling reform or changing the alphabet.

Dr. Louisa Moats: Yeah, I was going to ask you. I wasn’t sure where you were headed with this.

Spelling Reform Attempts - Roosevelt in 1906:

David Boulton: I don’t know if you are aware of the Theodore Roosevelt incident in 1906?

Dr. Louisa Moats: No.

David Boulton: Here’s a quick version of this part of the story, which I think you’ll get a kick out of:

– After the King James Bible was released, when mass literacy started to develop, people were saying: kids know the letters but they can’t read. What’s going on? Phonics developed in the 1600’s in response to that.

– Later, Ben Franklin comes to believe that the alphabet needs to be repaired. He designs his own version of an alphabet and publishes it.

– Noah Webster gets involved when Franklin’s alphabet doesn’t go anywhere and begins the process of American spelling reform. Webster only accomplishes about fifty word-spelling simplifications.

– These two believe that fixing the orthography so that it has a different processing ecology, to use our language today, is right up there with designing the Constitution, because it affects so many people’s minds: the national intelligence, how long it takes children to learn to read, all of this. But they don’t get anywhere.

– In the 1880’s Melvil Dewey, the man who creates the Dewey Decimal system for libraries, thinks that one of the biggest problems facing the national intelligence of the country is spelling – our orthography.

– Dewey manages to bring together a round table of hundreds of top people, including Charles Darwin, the presidents of many major universities in England and America, the Commissioner of Education in the United States, Supreme Court Justices, and the publishers of magazines, dictionaries, newspapers, and encyclopedias. They all come together and work out a system to adjust English spelling. Later the list would include Mark Twain, H.G. Wells, William James and many other luminaries. Because they recognize that Franklin and Webster were unsuccessful advocating change the way they did, they come up with a multi-generational plan: introduce a small number of new spellings each year via the dictionaries, the magazines, and the newspapers to shift the interior users, the power users of the language, into these spellings. They believe this is important both to language imperialism, which is the core of imperialism, and to reducing the time and fallout in the education system that are responsible for huge social inequities.

– In 1906 one of the secretaries in charge of all of this happens to be a friend of Theodore Roosevelt. He tells Roosevelt about it in the White House one day, and Roosevelt, who is ashamed of his spelling skills, gets really excited about it. He takes their list, forwards it to the U.S. Government Printing Office, and says: you will now print everything this way.

– It causes a stir, a fury that ends up embroiling Congress and the Supreme Court, and Congress actually passes a resolution forbidding the President or anyone else from using spellings other than those accepted in standard dictionaries.

Dr. Louisa Moats: That is amazing! I have never heard that before!

David Boulton: There are a number of stories like this that are fantastic. We have teased out the stories of so many characters throughout history who have tried to change the code, who have seen that the processing ecology of this code is affecting the entire world.

Andrew Carnegie put up almost a quarter of a million dollars in support of spelling reform. He believed the reforms would help reduce the social inequities associated with illiteracy. When the newspapers got wind of Roosevelt’s attempt to change the spelling, he was crucified in the press. The main thing that stopped the whole process was that the newspapers reported it was all just a scheme financed by Carnegie so he could get richer selling the new books that would be required.

Dr. Louisa Moats: Oh gosh! (laughing)

David Boulton: So Teddy Roosevelt’s exuberance, his shame about spelling, and his charge up the hill to fix it derailed a program that could have changed forever how we all read today.

Dr. Louisa Moats: Isn’t that interesting. Do you have copies of text written the way they proposed?

David Boulton: Yes. In fact, the Simplified Spelling Society still exists today, a worldwide organization with roots going back to the time I’m talking about. They have competing orthographies that they’re working out and trying to test and get approved.

Dr. Louisa Moats: There’s no one that seems to be winning favor.

Institutional Inertia:

David Boulton: There are some that are preferred inside their circles. But the problem is that, among other reasons, it is not in the best interests of the institutions and people who are successful and powerful inside the orthography as it is. Why should they go through all the time, trouble, and planned obsolescence of the vast libraries of things as they are to shift the system?

The institutional inertia that resists change here is phenomenal. In fact, Charles Hockett once said that changing a written language is more difficult than changing a religion. It’s that deep.

Dr. Louisa Moats: Well, I would think so. I’m not surprised that the world has reacted in that way. Just think how much would have to change. And yet as you portray this story I think you have got to be careful to avoid having people think it is so difficult and hopeless that the way it is taught is irrelevant.

David Boulton: I don’t think that at all. In fact, I think the closer we get to understanding it for what it is – the history of the orthography, the fact that nobody was minding the store, that this is a technological mess, that ultimately our reading problem is a technological interface problem – the more everything we have been learning about learning to read plugs in.

What you have been describing about the difficulty of getting the teacher and parent community across the line comes down to this: the code is such a confusing mystery that unless you go the route of studying orthography and all these other complex pattern mappings, it doesn’t make any sense. How can we make it make more immediate sense to people, as a kind of inner reference ground for having the conversation about learning to read?

I appreciate your distinction. I am not trying to say the code is so confusing that it is hopeless or anything like that. In fact, what I’m finding is that the more we get into the different classes and kinds of ambiguity in the code, the more clear it is how all these various teaching solutions and instructional systems can plug in.

Spelling vs Reading:

Dr. Louisa Moats: Good. I would hope that would be the case. As I’m listening to you, I’m thinking that there is a remarkable and very interesting difference between how well people can learn to read and how well they can learn to spell. I find that this is one of the areas of educational endeavor that is simply not discussed, or where people have very primitive ideas about the act of spelling: what it takes to learn to spell, why it is difficult, and what it means when people have difficulties with it.

We actually find in our studies that the better we teach reading, the more likely it is, in the average population, that kids will become quite proficient readers but remain much poorer spellers than they are readers. I did my dissertation on spelling errors years ago, and that’s when I got really interested in all of this: trying to explain to people why learning to spell is much more difficult than learning to read. I hope that you address that topic somewhere in all of this, or if you don’t, I hope somebody does.

David Boulton: Yes. When I say Children of the Code it goes both ways here. I think the main thing is reading because we’ve got the most support for that right now in terms of the interest level. But there’s no question that both of these things are artificial code processes. Both of these things are steeped in unnatural kinds of ambiguity that the child must learn to work out.

The primary difference, the distinction I hang the two conversations on, is that in reading, the virtually heard or actually spoken stream has to be assembled faster than the child’s mind can volitionally participate in. They can stop reading and work the sound out, but in order to read with any fluency they can’t be conscious of it. Working out spelling, by contrast, involves conscious, volitional participation during the learning process. Both deal with the code and its various ambiguities relative to sound-symbol correspondences, but one deals with it in an entirely different time frame, in terms of brain processes, than the other.

Dr. Louisa Moats: Yes, the spelling requires a detailed recall of all the letters at a level not required by word recognition.

David Boulton: It does have some parallels when we’re talking about a word I don’t yet know, when I’m trying to sound out a word.

Dr. Louisa Moats: Yes.

David Boulton: The very edge of our reading difficulty is sounding out words we don’t visually recognize. Good visual pattern recognition (whole-word recognition) is built up by being able to sound out the words that we don’t already know.

Dr. Louisa Moats: Yes, but in spelling, the most common errors that adults make have to do with whether or not there is a double letter in the word, and of course you can resolve all those ambiguities if you know a lot about morphology and word origin. It helps if you know that the word aggressive is a prefix plus a root [ag-, an assimilated form of ad-, plus gress], so you have some idea why there are two g’s near the beginning. It is not guesswork, but most people don’t have that level of word knowledge, and they are just trying to remember how many letters there are and whether there is a double letter or not. I think it’s interesting to look at the corpus of spelling errors in the average population and where they tend to occur.

David Boulton: Naomi Baron did some work on this and showed the remarkable lack of spelling errors in college students’ instant messaging. That’s an interesting tangent to the research in this space. She wrote a book called Alphabet to Email: How Written English Evolved and Where It’s Heading.

Dr. Louisa Moats: I haven’t seen that.

It All Comes Down to a Code:

David Boulton: What we’re trying to say is this code problem is causing more long-term life harm to our population than parental abuse, accidents, and every other kind of developmental disorder combined.

Dr. Louisa Moats: I would certainly agree with that.

David Boulton: It’s costing us more than all of the wars we’re engaged in combined.

Dr. Louisa Moats: Well, that is a great statistic.

David Boulton: It all boils down to an antiquated technology that nobody has been overseeing. How can we re-conceptualize our relationship with it? I think an entirely different relationship is possible, one that doesn’t require fighting all the inertia or changing the alphabet or the spelling, and that focuses on helping children move through these layers of ambiguity. It begins with our understanding these layers of ambiguity in relation to the kinds of experiences children are having – not our adult-formed, adult-centric models.

Dr. Louisa Moats: That’s a wonderful thesis and it’s just so refreshing to hear you articulate that because I guess I’m in total agreement with it. I have not really heard people articulate it as clearly as you have.

David Boulton: Thank you. To the extent that you’re interested in continuing this conversation, I’d like to loop you into different pieces of it as it emerges and get your feedback and comments.

Dr. Louisa Moats: Okay. I’d be delighted. I may have some expertise on the instructional piece of it; I am certainly not a Richard Venezky. But I love what you’re doing and I’d love to participate.

David Boulton: Relative to your comment about Venezky, we spent probably an hour or so on camera going back and forth, which finally brought us to our difference. His view is that yes, everything in the system makes sense. He spent twenty years in computer science trying to develop a pattern analysis system to reconcile all of this, and I totally agree with and understand that. But the point isn’t what we can understand on the other side of it. It’s what confusions the children are experiencing before they get through it. The closer we get to that, the better the bridge we can build.

Dr. Louisa Moats: Yes.

David Boulton: There’s another dimension to this that we haven’t had a chance to touch on. I’d like to have one more conversation and talk about assessments and how to assess for code processing errors.

Dr. Louisa Moats: Okay. I’d love to talk with you. My job today is putting together a module for teacher training on assessment – that is exactly what I am working on. It’s a real bear – what to assess, what to make of students’ responses. It’s very interesting.

David Boulton: How can we peer into the child’s confusion? The miraculous intersection is that the closer we get to their actual confusions, the better we can meet them. And the more aware they become of their confusions, and of the fact that they can develop strategies to work through them, the better off they are. That’s the space I want to explore. Thank you so much.

Dr. Louisa Moats: Thank you.